Multi-objective hyperparameter optimization is now routine in many application settings, where practitioners must balance predictive performance against competing objectives such as inference time, model size, or energy consumption. Despite this, most hyperparameter optimization methods for multi-objective problems still treat the hyperparameter space as static: all dimensions are assumed to be equally relevant throughout the search, regardless of the trade-off being explored.

In practice, this is inefficient. Some hyperparameters matter a lot for certain objective trade-offs, while others barely matter at all [1]. The central claim of our paper, "Dynamic Hyperparameter Importance for Efficient Multi-Objective Optimization", is that if only a subset of hyperparameters substantially influences a given scalarization of the objectives, then restricting the optimization to those dimensions should improve sample efficiency.

Why Importance Should Be Dynamic

Hyperparameter importance methods are typically applied after optimization, either to interpret results or to guide manual tuning. In a multi-objective setting, however, importance depends on the objective being optimized: a hyperparameter that strongly affects accuracy may be almost irrelevant to inference time or energy consumption. Ignoring this wastes evaluations in high-dimensional search spaces. We argue that importance should guide the search itself, not just explain it afterward.

HPI-ParEGO in a Nutshell

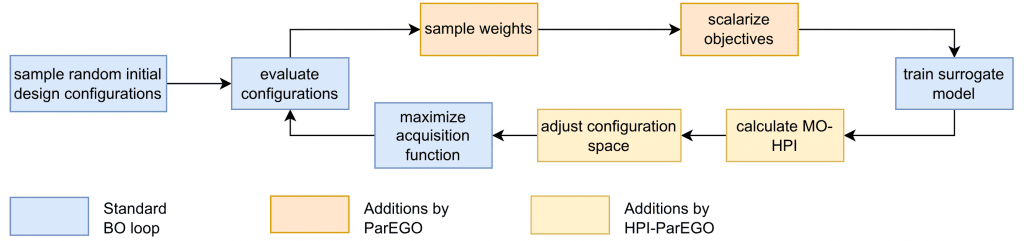

Our paper extends ParEGO [2], a popular scalarization-based multi-objective optimizer that converts a multi-objective problem into a sequence of single-objective problems by scalarizing the objectives with random weight vectors. HPI-ParEGO augments ParEGO with dynamic hyperparameter importance.
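
In the original ParEGO, the objectives are first normalized to [0, 1], a weight vector is drawn from a set of evenly spaced vectors on the simplex, and the objectives are combined with the augmented Chebyshev function max_j(w_j f_j) + ρ Σ_j w_j f_j with ρ = 0.05. The following is a minimal sketch of that scalarization step for minimized objectives; the function names, the lattice resolution `s=10`, and the toy data are our own illustrative choices.

```python
import itertools

import numpy as np

RHO = 0.05  # augmentation coefficient used in the original ParEGO paper


def weight_lattice(n_objectives: int, s: int) -> list:
    """All weight vectors whose components are multiples of 1/s and sum to 1."""
    return [
        np.array(combo, dtype=float) / s
        for combo in itertools.product(range(s + 1), repeat=n_objectives)
        if sum(combo) == s
    ]


def normalize(Y: np.ndarray) -> np.ndarray:
    """Rescale each objective (column) to [0, 1] using the observed min and max."""
    lo, hi = Y.min(axis=0), Y.max(axis=0)
    return (Y - lo) / np.where(hi > lo, hi - lo, 1.0)


def augmented_chebyshev(Y_norm: np.ndarray, w: np.ndarray, rho: float = RHO) -> np.ndarray:
    """Scalarize each row y as max_j(w_j * y_j) + rho * sum_j(w_j * y_j); lower is better."""
    weighted = Y_norm * w
    return weighted.max(axis=1) + rho * weighted.sum(axis=1)


# Usage: three observed configurations evaluated on two minimized objectives (toy data)
Y = np.array([[0.1, 0.9], [0.5, 0.5], [0.9, 0.1]])
rng = np.random.default_rng(0)
lattice = weight_lattice(n_objectives=2, s=10)
w = lattice[rng.integers(len(lattice))]  # one random weight vector per iteration
scores = augmented_chebyshev(normalize(Y), w)
```

With equal weights `[0.5, 0.5]`, the balanced configuration `[0.5, 0.5]` receives the lowest score; the max term is what lets the Chebyshev scalarization reach non-convex parts of the Pareto front, which a plain weighted sum cannot.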

In each ParEGO iteration, HPI-ParEGO proceeds as follows:

- Sample a weight vector and use it to scalarize the objectives.

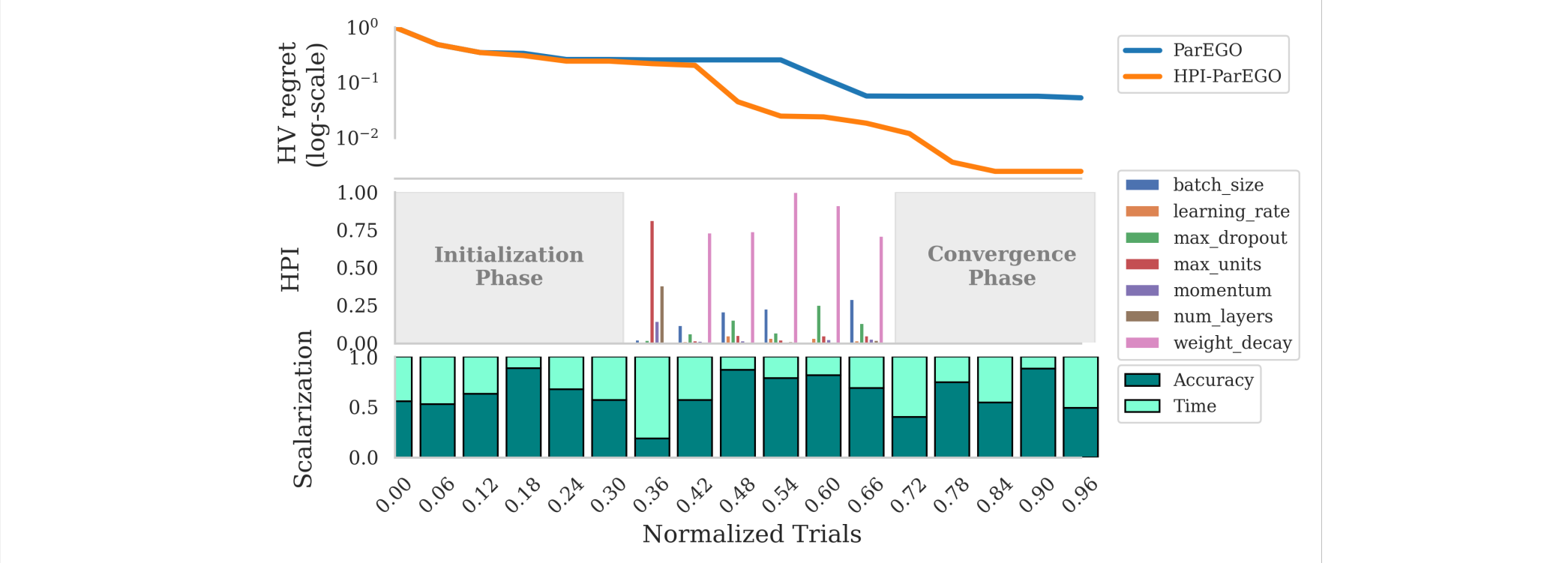

- Use HyperSHAP [3] to estimate hyperparameter importance with respect to the current scalarized objective.

- Optimize only the most important hyperparameters, and temporarily fix the less important ones to their values in the current incumbent.
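
HyperSHAP [3] attributes hyperparameter importance with Shapley values. The sketch below is not the HyperSHAP API; it is an illustrative stand-in that computes exact Shapley values for a hypothetical ablation-style game, in which the payoff of a coalition S is the reduction in the scalarized objective when the hyperparameters in S are switched from a reference configuration to a target configuration. It then keeps the top-k hyperparameters and freezes the rest at the incumbent. The game definition, the toy objective, and the uniform sampling (used in place of optimizing an acquisition function over the active dimensions) are all assumptions.

```python
import itertools
import math
from typing import Callable, FrozenSet, Sequence

import numpy as np

Game = Callable[[FrozenSet[int]], float]


def shapley_values(n: int, value: Game) -> np.ndarray:
    """Exact Shapley values by enumerating all coalitions (only feasible for small n)."""
    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for coalition in itertools.combinations(others, size):
                S = frozenset(coalition)
                phi[i] += weight * (value(S | {i}) - value(S))
    return phi


def ablation_game(objective: Callable[[np.ndarray], float],
                  reference: Sequence[float], target: Sequence[float]) -> Game:
    """Hypothetical ablation game: hyperparameters in S take their `target` value, the
    others keep their `reference` value; the payoff is the reduction in the minimized
    objective relative to the reference configuration."""
    ref = np.asarray(reference, dtype=float)
    tgt = np.asarray(target, dtype=float)
    base = objective(ref)

    def value(S: FrozenSet[int]) -> float:
        x = ref.copy()
        for j in S:
            x[j] = tgt[j]
        return base - objective(x)

    return value


def select_active(phi: np.ndarray, k: int) -> list:
    """Indices of the k hyperparameters with the largest absolute Shapley value."""
    return sorted(int(j) for j in np.argsort(-np.abs(phi))[:k])


def sample_in_subspace(incumbent, active, lower, upper, rng) -> np.ndarray:
    """Draw one candidate: active dimensions uniformly within their bounds, all others
    frozen at the incumbent (uniform sampling stands in for acquisition optimization)."""
    x = np.asarray(incumbent, dtype=float).copy()
    for j in active:
        x[j] = rng.uniform(lower[j], upper[j])
    return x


# Usage on a hypothetical scalarized objective: x0 matters most, x2 barely at all
f = lambda x: 3 * (x[0] - 1) ** 2 + (x[1] - 1) ** 2 + 0.01 * (x[2] - 1) ** 2
phi = shapley_values(3, ablation_game(f, reference=[0, 0, 0], target=[1, 1, 1]))
active = select_active(phi, k=2)
rng = np.random.default_rng(0)
candidate = sample_in_subspace([1.0, 1.0, 0.3], active, lower=[0, 0, 0], upper=[2, 2, 2], rng=rng)
```

Because this toy objective is additive, the Shapley values reduce to each hyperparameter's individual contribution (roughly 3, 1, and 0.01), so `x2` is frozen and only `x0` and `x1` are searched. Exact enumeration grows exponentially with the number of hyperparameters, so practical tools rely on approximations.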

Because the weights, and hence the trade-off being optimized, change from iteration to iteration, the set of optimized hyperparameters changes over time, and no hyperparameter is permanently removed. This reflects the empirical observation that different regions of the Pareto front may depend on different subsets of hyperparameters. Early in the search, the method explores broadly; later, it focuses on the hyperparameters that matter most before fine-tuning solutions.
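
To make the per-iteration switching of the active set concrete, here is a toy, end-to-end sketch of the loop structure described above. It is not the authors' implementation. Assumptions: a hypothetical bi-objective problem where f1 depends on `x0` and `x1` and f2 on `x2` and `x3`; the true toy function stands in for a surrogate model; importance uses exact Shapley values of an ablation from a hypothetical default configuration to the incumbent; and random candidate search replaces ParEGO's Gaussian-process and expected-improvement step.

```python
import itertools
import math

import numpy as np

RHO = 0.05  # ParEGO's augmentation coefficient


def objectives(x: np.ndarray) -> np.ndarray:
    """Hypothetical toy problem (both minimized): f1 is driven by x0, x1; f2 by x2, x3."""
    f1 = (x[0] - 0.2) ** 2 + (x[1] - 0.8) ** 2 + 0.01 * x[2]
    f2 = (x[2] - 0.7) ** 2 + (x[3] - 0.3) ** 2 + 0.01 * x[0]
    return np.array([f1, f2])


def scalarize(y: np.ndarray, w: np.ndarray) -> float:
    """Augmented Chebyshev scalarization; normalization is skipped because the toy
    objectives are already on a similar scale."""
    return float(np.max(w * y) + RHO * np.sum(w * y))


def shapley_values(n, value):
    """Exact Shapley values by coalition enumeration (fine for a handful of dimensions)."""
    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for coalition in itertools.combinations(others, size):
                S = set(coalition)
                phi[i] += weight * (value(S | {i}) - value(S))
    return phi


def hpi_parego_sketch(n_iter=30, k=2, n_init=8, n_candidates=64, seed=0):
    rng = np.random.default_rng(seed)
    dim = 4
    default = np.full(dim, 0.5)  # hypothetical reference configuration for the ablation
    X = [rng.uniform(0.0, 1.0, dim) for _ in range(n_init)]  # random initial design
    Y = [objectives(x) for x in X]
    history = []
    for _ in range(n_iter):
        # 1. Sample weights from an evenly spaced lattice (step 0.1) and find the incumbent.
        lam = rng.integers(0, 11) / 10
        w = np.array([lam, 1.0 - lam])
        incumbent = X[int(np.argmin([scalarize(y, w) for y in Y]))]

        def g(x):
            # Stand-in for a surrogate of the scalarized objective (here: the toy function).
            return scalarize(objectives(x), w)

        # 2. Importance: Shapley values of switching hyperparameters from default to incumbent.
        def value(S):
            x = default.copy()
            for j in S:
                x[j] = incumbent[j]
            return g(default) - g(x)

        phi = shapley_values(dim, value)
        active = sorted(int(j) for j in np.argsort(-np.abs(phi))[:k])
        history.append((w, active))

        # 3. Search only the active dimensions; the others keep the incumbent's values.
        cands = np.tile(incumbent, (n_candidates, 1))
        cands[:, active] = rng.uniform(0.0, 1.0, (n_candidates, k))
        x_next = min(cands, key=g).copy()
        X.append(x_next)
        Y.append(objectives(x_next))
    return X, Y, history


X, Y, history = hpi_parego_sketch()
for w, active in history[:5]:
    print(f"weights={w}, active hyperparameters={active}")
```

In this toy setting, a weight vector of `[1.0, 0.0]` can never select `x3`, and `[0.0, 1.0]` can never select `x1`, because those hyperparameters have no effect on the corresponding scalarization; intermediate weights mix the two groups.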

What is the Gain?

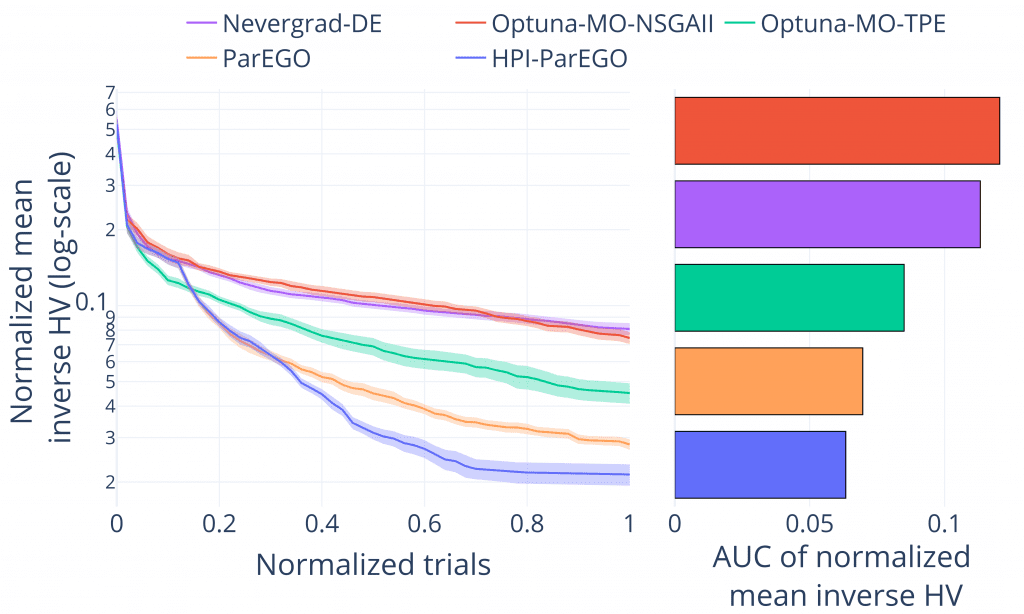

On both synthetic benchmarks from pymoo [4] and realistic AutoML tasks from YAHPO Gym [5], HPI-ParEGO almost always:

- Converges faster than the baseline ParEGO and other common multi-objective optimizers.

- Produces better Pareto fronts, i.e., fronts that dominate a larger hypervolume.

- Is particularly beneficial when only a few hyperparameters actually matter for a given trade-off.
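
For two minimized objectives, the hypervolume of a front is the area of objective space that it dominates, bounded by a user-chosen reference point; a larger value means a better front. The following is a minimal sketch of the two-dimensional case (libraries such as pymoo ship general-purpose hypervolume indicators); the function names and example points are illustrative.

```python
import numpy as np


def pareto_front(Y) -> np.ndarray:
    """Non-dominated rows of Y (all objectives minimized)."""
    Y = np.asarray(Y, dtype=float)
    keep = []
    for i, y in enumerate(Y):
        # y is dominated if some row is <= in every objective and < in at least one
        dominated = np.any(np.all(Y <= y, axis=1) & np.any(Y < y, axis=1))
        if not dominated:
            keep.append(i)
    return Y[keep]


def hypervolume_2d(Y, ref) -> float:
    """Area dominated by the points in Y and bounded by the reference point `ref`."""
    front = pareto_front(Y)
    front = front[np.all(front < np.asarray(ref), axis=1)]  # only points inside the box count
    front = front[np.argsort(front[:, 0])]  # ascending f1 implies descending f2 on a front
    hv, prev_f2 = 0.0, float(ref[1])
    for f1, f2 in front:
        hv += (ref[0] - f1) * (prev_f2 - f2)  # add the horizontal strip this point contributes
        prev_f2 = f2
    return float(hv)


print(hypervolume_2d([[1, 3], [2, 2], [3, 1]], ref=(4, 4)))  # 6.0
```

Adding a dominated point such as `[3, 3]` leaves the value unchanged, whereas adding `[1.5, 1.5]` replaces `[2, 2]` on the front and raises the hypervolume to 7.25.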

Why This Is Interesting for AutoML

- This work turns hyperparameter importance from a passive analysis tool into an active optimization signal.

- It highlights that multi-objective optimization implicitly defines multiple learning problems, and the hyperparameters that matter for one may be largely irrelevant for another.

- Empirical results with HPI-ParEGO suggest that adaptive, importance-aware search spaces can improve efficiency, particularly in high-dimensional problems with structured relevance.

- More generally, the paper provides a clear, principled example of how interpretability concepts can be integrated effectively into optimization.

References

- [1] D. Theodorakopoulos, F. Stahl, and M. Lindauer. Hyperparameter importance analysis for multi-objective AutoML. In Proc. of ECAI’24, pages 1100–1107, 2024.

- [2] J. D. Knowles. ParEGO: a hybrid algorithm with on-line landscape approximation for expensive multiobjective optimization problems. IEEE Transactions on Evolutionary Computation, 10(1):50–66, 2006.

- [3] M. Wever, M. Muschalik, F. Fumagalli, and M. Lindauer. HyperSHAP: Shapley values and interactions for hyperparameter importance. In Proc. of AAAI’26, 2026.

- [4] J. Blank and K. Deb. pymoo: Multi-objective optimization in Python. IEEE Access, 8:89497–89509, 2020.

- [5] F. Pfisterer, L. Schneider, J. Moosbauer, M. Binder, and B. Bischl. YAHPO Gym – an efficient multi-objective multi-fidelity benchmark for hyperparameter optimization. In Proc. of AutoML Conf’22. PMLR, 2022.
