Softmax attention models have become a keystone of modern large language models (LLMs). However, the […]

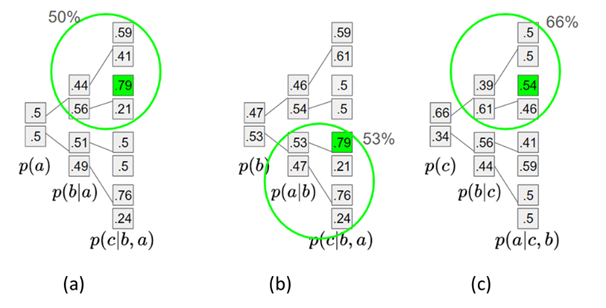

Neural Architecture Search (NAS) has achieved great success by searching for optimal architectures for specific tasks by […]

Submitted by Mehraveh Javan Roshtkhari, Matthew Toews and Marco Pedersoli as part of the AutoML’25 conference. The […]